Please note that setting up URL Filters won't reduce memory consumption: all the pages and resources will still be crawled, since the ones to be excluded may contain links to others that don't match the filter condition. The URLs will therefore only be excluded at the end of the crawling process. In the URL Parameters section, set the app to Ignore ALL Parameters to prevent it from collecting endlessly generated dynamic URLs.

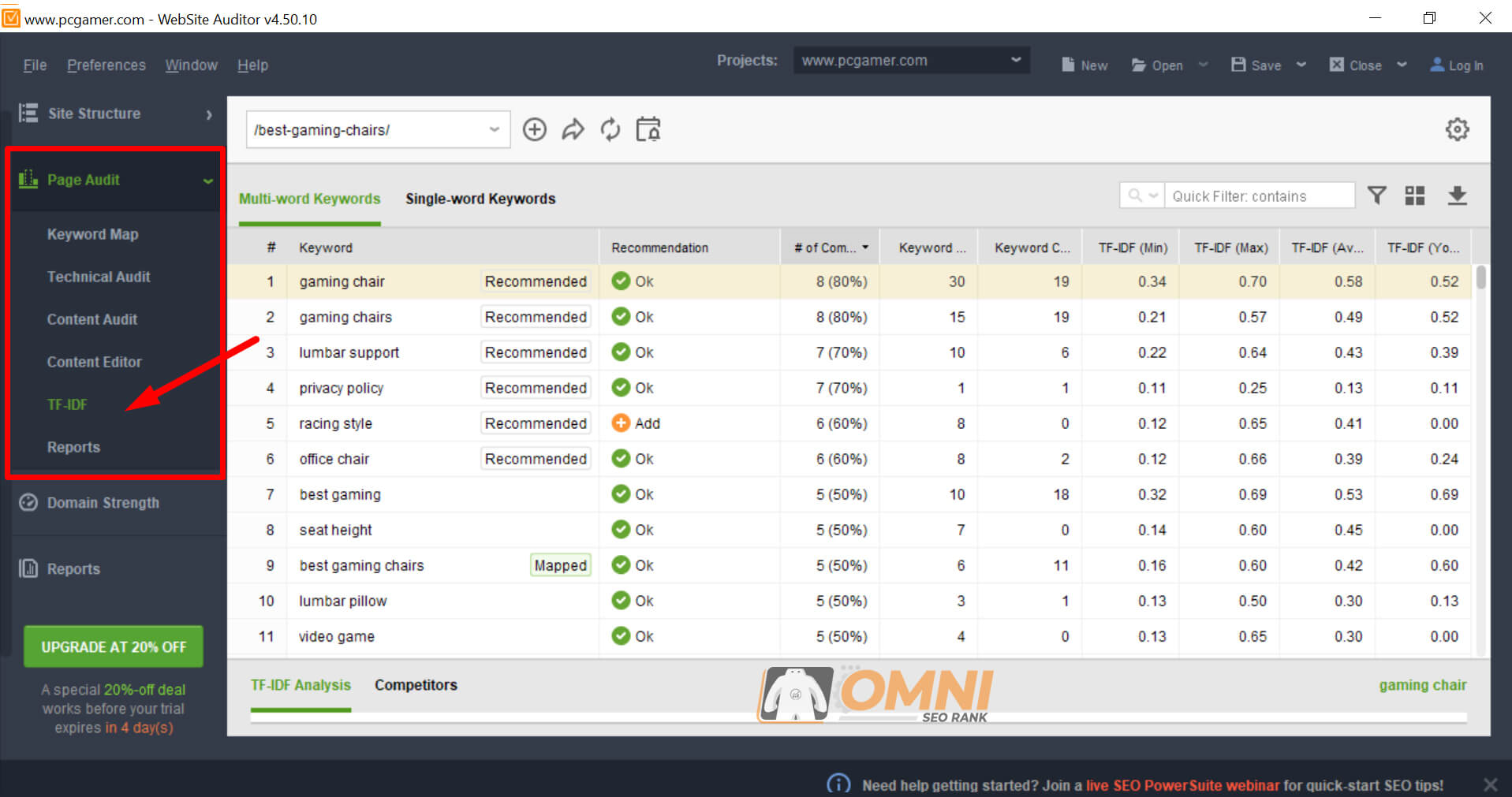

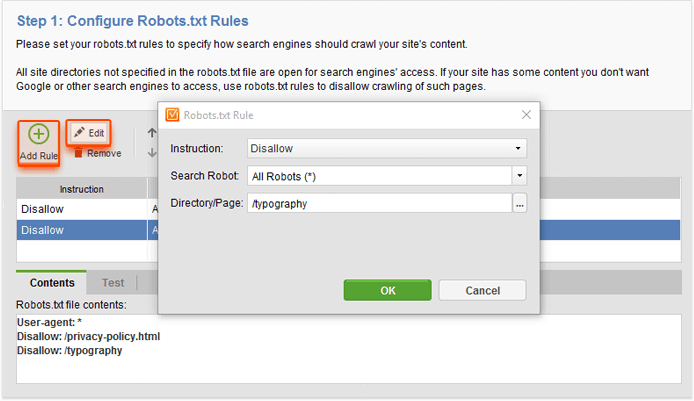

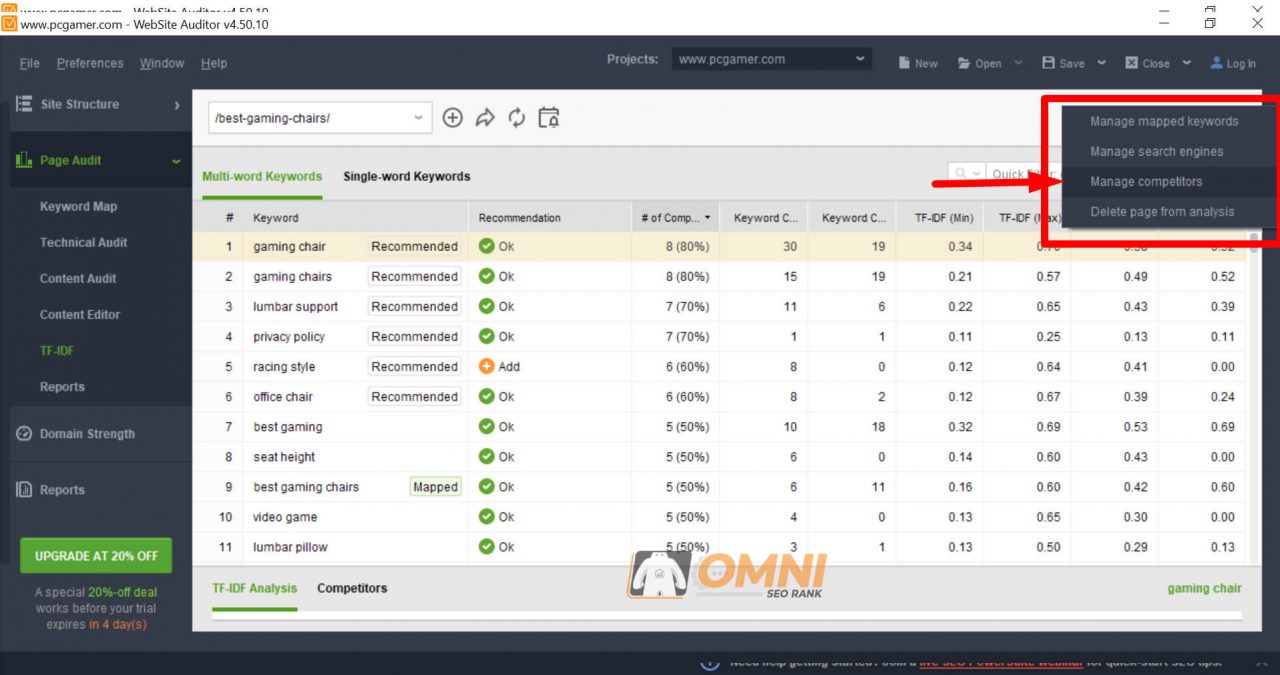
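To see why ignoring URL parameters keeps a crawl from growing without bound, here is a minimal Python sketch. It is only an illustration, not WebSite Auditor's actual implementation; the helper `strip_parameters` and the example URLs are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def strip_parameters(url: str) -> str:
    """Return the URL without its query string and fragment (hypothetical helper)."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


# Made-up URLs: three dynamic variants of one page plus one static page.
urls = [
    "https://example.com/catalog?page=1&sort=price",
    "https://example.com/catalog?page=2&sort=price",
    "https://example.com/catalog?sessionid=abc123",
    "https://example.com/about",
]

# With parameters ignored, the dynamic variants collapse into a single page.
unique_pages = sorted({strip_parameters(u) for u in urls})
print(unique_pages)
# → ['https://example.com/about', 'https://example.com/catalog']
```

Four collected URLs shrink to two distinct pages, which is the effect that matters on sites that generate parameter combinations endlessly.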

What do I do if WebSite Auditor freezes or runs out of memory?

Like every SEO PowerSuite app, WebSite Auditor is built on Java and has to process a large amount of data, so it can be resource-demanding. To prevent heavy load on an average system, RAM consumption in our tools is limited to ~1-4 GB by default (depending on the OS and other factors), regardless of how much RAM you actually have. For that reason, if you're working on a heavy project, have a lot of unnecessary projects open, or have multiple Java-based apps running, the apps may eventually run out of memory and freeze or crash. There are several ways to overcome that.

You can prevent the app from running out of memory by increasing the default limit in accordance with your actual system capabilities. The more RAM you have, the higher the value you can set. For instance, extending the memory limit to 3500 MB (with 4 GB of RAM) will let the software handle sites with up to 50K pages. Some older PCs may only have 2 GB of RAM; extending the default memory limit won't help in this case.

To make it possible for the app to crawl a large website, you'll need to set reasonable limits on the crawl itself. Upon creating or rebuilding a project, enable Expert options and use the settings below. In the Robots.txt Instructions section, you can limit the scan depth (scanning a site 2-3 clicks deep should collect the most important, core pages). In the Filtering section, you can exclude some resources from being collected into your project (leave only HTML, or exclude certain resource types: scripts, images, videos, PDFs, etc.).

TF-IDF is now also used to calculate optimization metrics under WebSite Auditor's Page Audit dashboard. Unlike old-school metrics such as keyword density, TF-IDF will accurately determine whether there are keyword stuffing or under-optimization issues in your content or any given page element.

Now, let's suppose that I have a new document and try to compute its distance to the documents in the corpus. Of course, I need to apply to it all the transformations I applied to the original set. But I do not understand how to compute the tf-idf vector of the new document, because tf-idf depends on the whole set, not on this single document.
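On the question of the tf-idf vector for a new document: one common approach is to compute the IDF weights once from the original corpus and reuse them unchanged for the new document, so only its term frequencies are new. The sketch below assumes whitespace tokenization, a smoothed IDF variant (`ln((1 + N) / (1 + df)) + 1`), and cosine similarity as the distance measure. All function names and sample texts are made up for illustration, and terms that never occurred in the corpus are simply dropped.

```python
import math
from collections import Counter


def tokenize(text):
    # Assumption: lowercase + whitespace split stands in for the real preprocessing.
    return text.lower().split()


def fit_idf(corpus_tokens):
    """Learn IDF weights from the original corpus only."""
    n_docs = len(corpus_tokens)
    df = Counter()
    for tokens in corpus_tokens:
        df.update(set(tokens))  # count each term once per document
    # Smoothed IDF variant: ln((1 + N) / (1 + df)) + 1
    return {t: math.log((1 + n_docs) / (1 + c)) + 1 for t, c in df.items()}


def tfidf_vector(tokens, idf):
    """Weight raw term counts by the corpus IDF; unseen terms are dropped."""
    tf = Counter(t for t in tokens if t in idf)
    return {t: count * idf[t] for t, count in tf.items()}


def cosine_similarity(a, b):
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


corpus = [
    "the cat sat on the mat",
    "the dog chased the cat",
    "birds sing in the morning",
]
corpus_tokens = [tokenize(doc) for doc in corpus]
idf = fit_idf(corpus_tokens)  # fitted once, on the corpus
corpus_vectors = [tfidf_vector(tokens, idf) for tokens in corpus_tokens]

# The new document goes through the same transformations with the same IDF.
new_doc = "a cat chased a bird"
new_vector = tfidf_vector(tokenize(new_doc), idf)
similarities = [cosine_similarity(new_vector, v) for v in corpus_vectors]
print(similarities)
```

Here the new document ends up closest to the second corpus document, which shares both "cat" and "chased" with it, and has zero similarity to the third. Whether to refit the IDF periodically as new documents accumulate is a separate design choice that this sketch leaves open.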
